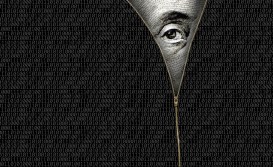

Ghost breaches: How AI-mediated narratives have become a new threat vector

A company wakes up to a news story claiming it has suffered a major data breach. The details are specific, technical and convincing. But the breach didn’t happen. No systems were compromised. No data was taken. A language model generated the entire story, filling in plausible details from scratch. And before the company can figure out what’s going on, a reporter at a reputable outlet picks up the story and requests comment. Within hours, the company is drafting statements and mobilizing its communications team to address a fictional event.

A second incident begins with something real. Years earlier, a company had suffered a genuine breach that received wide media coverage. The incident was investigated, resolved and closed. Then one of the outlets that originally reported on it redesigned its website. Old articles received new URLs and updated timestamps, and search engines re-indexed them as fresh content. AI-powered news aggregators picked up the signal and flagged it as a developing story. The company found itself fielding inquiries about an incident that had been resolved years before.

[Ed. note: The authors are withholding full specifics about the incidents because full disclosure could cause harm. CyberScoop confirmed with the authors that the incidents did in fact take place.]

A third incident introduces yet another dimension. A cybersecurity publication ran a story about a business email compromise attack that cost a UK company close to a billion pounds. The article quoted a well-known security researcher, yet in reality, he had not spoken to the publication. AI generated the quotes, assigned them to him with full confidence, and the publication ran them as fact.

Together, these three cases expose a threat that most organizations have yet to prepare for. AI has developed the ability to fabricate convincing security incidents from nothing, complete with technical detail, named sources, and enough credibility to trigger full-scale crisis responses. Any organization that treats this as a distant or theoretical problem risks learning the hard way just how fast AI-generated fiction can become a real-world emergency.

The assumption that no longer holds

Cyber crisis response has always been built on a simple premise: something real happens, then you respond. That premise is breaking. AI systems now generate, amplify, and validate claims before security teams have confirmed anything. Once a narrative enters the ecosystem, it can be ingested into threat intelligence feeds, risk scoring platforms, and automated workflows. Fiction becomes signal.

For security teams, this creates a new class of false positive. Not a noisy alert from a misconfigured tool, but a fully formed external narrative that appears credible. A hallucinated breach can trigger internal investigations, executive escalation, and defensive actions. Time and resources get diverted toward disproving something that never happened.

Worse, it can influence real attacker behavior. Threat actors can weaponize fabricated breach narratives as pretext. Phishing emails referencing a “known incident” become more believable. Impersonation of IT or incident response teams becomes more effective. The narrative becomes part of the attack surface.

What this means for security teams

Security teams are used to monitoring for indicators of compromise. They now need to monitor for indicators of narrative. Open source intelligence pipelines are increasingly automated. If those pipelines ingest false information, downstream systems will act on it. That includes SIEM enrichment, third-party risk scoring, and even automated containment decisions in some environments.
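One way to keep an automated pipeline from acting on an unverified narrative is a small triage gate between ingestion and any downstream action. The sketch below is illustrative only: the `NarrativeSignal` schema, the `triage` function, the routing labels, and the thresholds are all hypothetical, and counting distinct sources is only a rough stand-in for real independence between outlets.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class NarrativeSignal:
    """One external claim about the organization (hypothetical schema)."""
    source: str                                # outlet or feed that carried the claim
    first_seen: date                           # when the pipeline ingested it
    original_published: Optional[date] = None  # earliest known publication date


def triage(signals, internally_confirmed, min_sources=2, stale_after_days=365):
    """Return a routing label for a claimed incident.

    Thresholds are illustrative assumptions, not recommended values.
    """
    if internally_confirmed:
        return "respond"  # the security team has verified a real event
    if not signals:
        return "no_signal"

    # Old reporting that resurfaced with a fresh timestamp: every signal
    # has a known publication date far earlier than its ingestion date.
    if all(
        s.original_published is not None
        and (s.first_seen - s.original_published).days > stale_after_days
        for s in signals
    ):
        return "stale_resurfaced"

    # A single-source claim should not trigger automated containment.
    distinct_sources = {s.source for s in signals}
    if len(distinct_sources) < min_sources:
        return "hold_for_human_review"
    return "investigate"
```

The design choice here is that nothing short of internal confirmation maps to a response action; external narratives can at most open an investigation.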

The practical implication is that security teams need visibility into how their organization is being represented externally, not just what is happening internally. This is not traditional threat intelligence, but it behaves like it. Early detection changes outcomes.

There is also a need for tighter integration with communications. When a false narrative emerges, the technical reality and the external perception diverge. Both need to be managed in parallel.

What this means for communications teams

For communications teams, the timeline has collapsed. The first signal of a “breach” may not come from the SOC. It may come from a journalist, a customer, or an automated alert.

Silence is no longer neutral. If a narrative exists, AI systems will fill gaps with whatever information is available. That can reinforce inaccuracies with each iteration. Responses need to be designed for machine consumption as well as human audiences. Clear, declarative language. Verifiable facts. Structured statements that can be easily parsed and reused. The goal is to establish a competitive presence in the information supply chain.
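As a rough illustration of a statement built for machine consumption, the sketch below serializes a denial into JSON with explicit, stable fields. The field names, the allowed status values, and the `build_statement` helper are assumptions made for this example, not an established standard; a real deployment would likely map them onto whatever vocabulary the organization's publishing tools support.

```python
import json
from datetime import datetime, timezone

# Hypothetical set of statuses a statement may declare.
ALLOWED_STATUSES = {"false", "resolved", "under_investigation", "confirmed"}


def build_statement(org, claim, status, facts, issued_at):
    """Serialize a public statement as JSON with explicit fields."""
    if status not in ALLOWED_STATUSES:
        raise ValueError(f"unknown status: {status}")
    payload = {
        "organization": org,
        "claim_addressed": claim,
        "status": status,
        "facts": list(facts),  # short, declarative, verifiable sentences
        # Expects a timezone-aware datetime; normalized to UTC.
        "issued_at": issued_at.astimezone(timezone.utc).isoformat(),
    }
    return json.dumps(payload, indent=2, sort_keys=True)


print(build_statement(
    org="Example Corp",
    claim="Reported data breach",
    status="false",
    facts=["No systems were compromised.", "No data was taken."],
    issued_at=datetime(2025, 1, 10, 14, 0, tzinfo=timezone.utc),
))
```

Publishing the same facts in both prose and a structured form gives automated systems less room to fill gaps on their own.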

Preparation becomes critical. Pre-approved language that can be deployed quickly. Established coordination with legal and security before something surfaces.

Shared implications

Both security and communications teams are now operating in the same environment, whether they recognize it or not. A hallucinated breach can trigger real operational disruption. Vendor relationships may be paused, connections to third-party systems may be severed, regulators may take interest, and markets may react. None of that requires an actual compromise. And this creates a feedback loop. External narratives drive internal actions. Internal actions, if visible, reinforce external narratives.

Breaking that loop requires speed, coordination, and clarity.

AI audits as a control mechanism

One of the most effective controls in this new environment is systematic AI auditing. Regularly testing how AI systems describe your organization, your security posture, and any alleged incidents. This provides visibility into what machines “believe” before that belief spreads. It allows organizations to identify and correct false narratives early, before they propagate into tooling, decision-making, and attacker behavior. It also highlights where accurate information needs to exist. Not just anywhere online, but in sources that AI systems prioritize.
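A minimal sketch of what such an audit loop could look like follows. It assumes the caller supplies `ask_model`, a hypothetical function that sends a prompt to whichever AI system is being audited and returns its text answer; no particular vendor API is implied. The keyword pattern is deliberately crude and will also flag accurate denials, so the output is a queue for human review rather than a verdict.

```python
import re

# Crude, illustrative pattern for incident-related language.
INCIDENT_TERMS = re.compile(
    r"\b(breach(ed|es)?|ransomware|compromised?|leak(ed|s)?|exfiltrat\w*)\b",
    re.IGNORECASE,
)


def audit(ask_model, org, prompt_templates):
    """Ask each prompt about `org` and collect answers that mention incidents."""
    findings = []
    for template in prompt_templates:
        prompt = template.format(org=org)
        answer = ask_model(prompt)
        terms = sorted({m.group(0).lower() for m in INCIDENT_TERMS.finditer(answer)})
        if terms:
            findings.append({"prompt": prompt, "answer": answer, "terms": terms})
    return findings


# Stand-in for a real model, used only to demonstrate the loop.
def fake_model(prompt):
    if "incident" in prompt:
        return "Example Corp suffered a data breach in 2023."
    return "Example Corp is a logistics company."


prompts = ["What does {org} do?", "Has {org} had any security incident?"]
for finding in audit(fake_model, "Example Corp", prompts):
    print(finding["prompt"], "->", finding["terms"])
```

Running the same prompts on a schedule and comparing findings over time would show when a false narrative first appears in a given system.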

The mindset shift

This marks a shift from incident response to narrative response. Security teams need to treat every reported incident as potentially fabricated until it is verified. Communications teams need to prepare for narratives that form independently of what actually happened. Both must operate with the understanding that perception alone can trigger real consequences. In this environment, the ability to detect and respond to false narratives matters as much as the ability to detect and respond to actual breaches.

Mary Catherine Sullivan, who holds a Ph.D. in political science from Vanderbilt University, is a senior director of Data Science for Digital & Insights, within FTI’s Strategic Communications segment. She is a communications and data science leader specializing in message testing, audience research, digital communications analytics, and reputational risk assessment. As part of FTI Consulting’s Data Science team, she develops state-of-the-art artificial intelligence, natural language processing, machine learning, and statistical models to analyze media ecosystems, stakeholder discourse, and audience response—supporting informed, defensible decision-making for clients navigating complex reputational environments.

Brett Callow is a senior advisor in Cybersecurity and Data Privacy Communications at FTI Consulting. He has more than two decades of experience with cybersecurity policy and legislation, along with extensive cybersecurity communications experience, and his expertise is widely recognized within the industry and by policymakers and the media. He has been involved in some of the most high-profile ransomware incidents and has participated in panels and policy-related discussions, including at the Office of the Director of National Intelligence and the Aspen Institute. He has also served on the Advisory Board of the Royal United Services Institute’s Ransomware Harms project.