How sloppy OPSEC gave researchers an inside look at the exploit industry

The companies that make advanced surveillance software are quiet by design. They generate enough press to let the market (i.e., governments) know their products exist, but it’s not as if there’s an app store for mobile spyware.

They do make mistakes, though. And thanks to two researchers from Lookout, the public now has more information on how these companies operate.

In the course of investigating a new kind of Android-focused mobile malware, Lookout’s Andrew Blaich and Michael Flossman uncovered text conversations among members of a nation-state’s surveillance program. Those files, which were stored on a server that was part of the malware’s command-and-control infrastructure, represented a trove of insight about how much money the particular government budgeted for its program, whether its spies decided to buy exploits or build their own, and why it’s easier than ever for countries to leverage surveillance technology.

It started when Blaich and Flossman were analyzing how a single malware sample had manipulated data within the popular messaging service WhatsApp. While doing that work, the researchers discovered approximately 20 servers that were being used to support various campaigns, as well as a cached database of information collected by the manipulated app.

However, the collected information didn’t appear to be coming from the supposed targets of the malware. Instead, researchers soon discovered, the messages were from people working on a surveillance program on behalf of the government. Those government developers were testing out the WhatsApp malware on their own devices, and it was storing their discussions on the program’s servers.

The nation-state essentially had hacked itself and accidentally dumped highly sensitive information on the open internet — including details of its interactions with the secretive vendors who sell spyware to governments.

“Once we did some initial mapping out of the infrastructure, we noticed that this particular adversary had made some operational security mistakes in terms of the configuration on this server,” Flossman told CyberScoop. “We’ve been like following a trail of breadcrumbs and slowly piecing together information about how this particular actor has operated, and in the process, unraveling their operations.”

The vendors mentioned in the conversations are a who’s who of the industry: The nation-state had been in touch with Expert Team, FinFisher, IPS, NSO Group, Ozeda Group, Palantir, Verint, Wintego, and Wolf Intelligence regarding the program, Blaich and Flossman said during a Jan. 19 presentation of their findings at Shmoocon, an information security conference based in Washington, D.C.

Lookout did not disclose which nation-state was behind the program, telling CyberScoop it will name the culprit in the future.

What malware works for you?

Beyond the vendor information, the exposed chats referenced the program’s budget, resourcing, desired implant capabilities, need for exploits and viable attack vectors. The researchers said during their presentation that not every company the nation-state spoke with was selling exploits. Rather, the conversations point to how government spies use other forms of technology that go beyond the exploits themselves.

“[The nation-state] had a call-to-action to look at all the types of different things that you would use to enhance and create a cyber surveillance program,” Blaich told CyberScoop. “Talk about desktop exploits, talk about mobile exploits, but also being interested in creating and enhancing their ability to do [open-source intelligence work], being able to process and ingest social media feeds and examine all that type of work. There’s also in some cases they’re looking at companies that are doing drone research. They are looking at the entire spectrum of everything.”

Researchers were also able to see some of the sales pitches various exploit companies made in response to this nation-state’s inquiries.

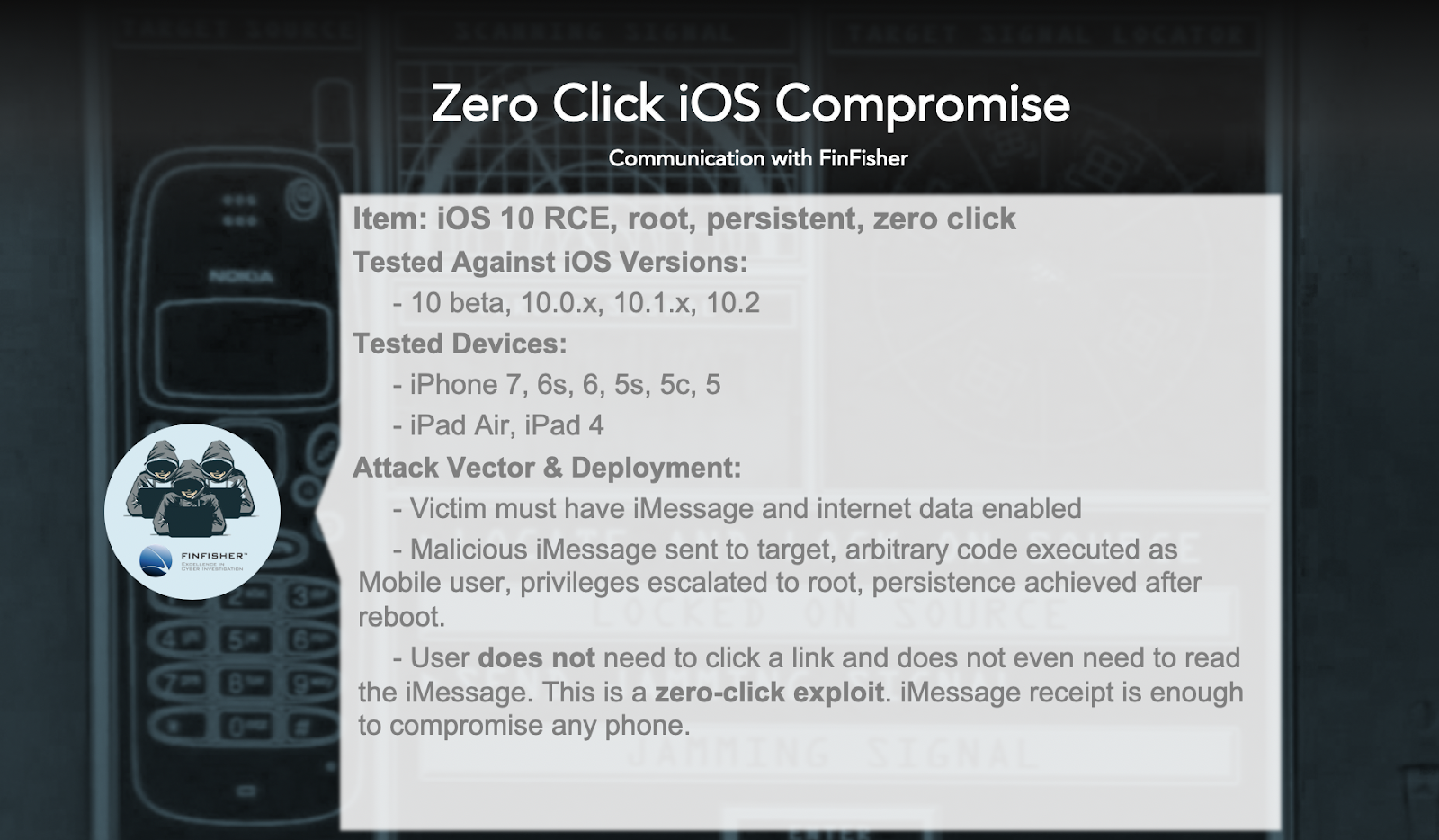

FinFisher, known for its FinSpy exploits, offered to sell the nation-state a zero-click iOS compromise that will give an attacker root access to a device. The exploit would work in iOS versions up to 10.2, the latest version of the operating system at the time in which Blaich and Flossman conducted their research.

A description of an exploit FinFisher wanted to sell to a particular nation-state. (Lookout)

NSO Group, the Israeli company behind the infamous Pegasus malware, offered up an Android exploit that would have taken advantage of an Adobe Flash zero-day. Delivered via SMS, the exploit forces the device’s default browser to connect to an attacker’s infrastructure.

Another company, Arity Business Inc., offered an array of exploits at a number of different price points. Researchers were intrigued by Arity, as it has no public-facing website. During their presentation, the Lookout researchers said they had never heard of the company prior to this instance.

Among the exploits Arity offered:

- An Android-focused exploit that would have taken advantage of the Stagefright vulnerability, sending an MMS with a weaponized video file that would have bypassed a memory protection in Android known as ASLR. The exploit, being sold for $90,000, would have allowed an attacker remote access to a device.

- An Adobe Flash zero-day that would have taken advantage of an ActionScript vulnerability in Microsoft’s Internet Explorer/Edge, Mozilla’s Firefox, Google’s Chrome and Opera’s Windows and Linux browsers. Offered for a price of $65,000, it would have allowed for remote code execution on a desktop device.

- Another desktop-focused zero-day that took advantage of a flaw in Microsoft Internet Explorer and Edge, allowing for code to be injected into a device remotely. The price for this particular exploit was $50,000.

Lookout researchers also found that the company offered a number of these exploits under a 40-day exclusivity window, and offered replacement exploits if the code didn’t work. The company also made the nation-state agree to “no stupid deployments” in exchange for the exploit. Such exploits are normally meant for highly specific targets, but researchers found that Arity once made a deal for an exploit, only to watch the nation-state attach it to a phishing attempt that was mass-emailed to an enterprise.

Buy versus build

Despite this particular nation-state having a $23 million budget for the surveillance program, it decided to build its own exploits, Blaich and Flossman said.

“This group, like most other groups, actually functions like an engineering organization or any company where they have a budget,” Blaich told CyberScoop. “The primary goals that [the nation-state] wanted to achieve was they want [to] be able to look at the correspondence that happens in these messaging apps: WhatsApp, Viber, Telegram. … They’re examining, they’re demoing, they’re trialing, but then there’s some point they’re saying, ‘OK, for right now it makes more sense for us to build it.’”

What was eventually built was the WhatsApp application that Blaich and Flossman initially uncovered. To the user, the app looks and functions like the legitimate application. However, its true function is to send the user’s messages back to the nation-state’s server, allowing whoever is running the program to parse through messages at will.

Additionally, researchers found a similar setup that focused on iOS, where apps made to look like popular iOS messaging platforms were also pointed to a server controlled by the nation-state.

Blaich and Flossman said the apps were developed using legitimate tools and methods that have a range of uses. In the case of the Android app, developers relied on sideloading, a process that allows apps to be installed on devices without going through the Google Play Store. In the iOS version, the nation-state’s developers relied on PPSideloader, a program that works with iPhones.

The process was not as sophisticated as some of the exploits that the private exploit sellers dangled. In order to sideload the malware, the nation-state either had to obtain physical access to the device or hope the user clicked on a phishing message.

Lookout has code-named the Android exploit “Barracuda.” The iOS version is code-named “Stonefish.”

A key takeaway from the research is the ever-lowering barrier to entry for nation-states looking to obtain or build surveillance exploits. Lookout said its findings indicate that the market for exploits is growing. Enterprises in the public and private sectors face more risk as a result, the researchers said.

“While we see a lot of mobile surveillanceware being built and designed for use by nation-states, these tools could be commercialized to target the enterprise by non-nation states actors too,” a Lookout blog post reads. “As we continue to move toward a post-perimeter world, CISOs that do not prioritize mobile security may put their enterprises at risk, since these surveillance capabilities are not only widespread, but exist with different incentives and use cases from traditional security models.”